this post was submitted on 05 Apr 2025

LocalLLaMA

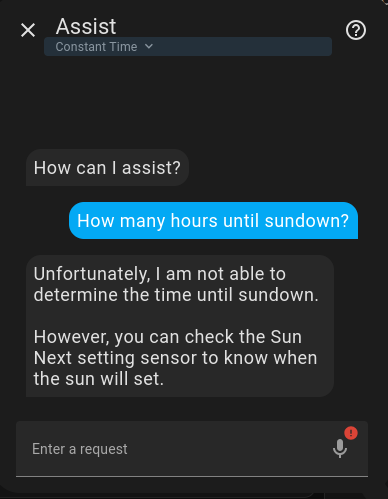

You may need to give your Assistant some tools.

Things like weather and time require the LLM to be able to fetch external data.
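For anyone wondering what "tools" means in practice, here is a minimal sketch in Python, outside of Home Assistant. It assumes the OpenAI-style function schema that, as far as I know, Ollama's chat API accepts in its `tools` field, so check the Ollama docs before relying on the exact shape. The tool name `get_current_time` and the helper `run_tool_call` are made up for illustration. No request is sent here; it only shows how a tool is described to the model and how a tool call coming back from the model could be executed locally.

```python
import json
from datetime import datetime, timezone


def get_current_time() -> str:
    """Hypothetical tool: return the current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


# Tool description passed to the model alongside the chat messages.
# Assumption: OpenAI-style function schema, as used by Ollama's `tools` field.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_current_time",
            "description": "Get the current date and time in UTC.",
            "parameters": {"type": "object", "properties": {}, "required": []},
        },
    }
]

# Maps tool names the model may request to real Python callables.
AVAILABLE = {"get_current_time": get_current_time}


def run_tool_call(tool_call: dict) -> dict:
    """Execute one tool call requested by the model and build the reply message.

    Assumes the call looks like {"function": {"name": ..., "arguments": ...}},
    where arguments is a dict or a JSON-encoded string.
    """
    fn = tool_call["function"]
    name = fn["name"]
    args = fn.get("arguments") or {}
    if isinstance(args, str):
        args = json.loads(args)
    if name not in AVAILABLE:
        content = f"error: unknown tool {name!r}"
    else:
        content = AVAILABLE[name](**args)
    # The result goes back to the model as a "tool" message so it can answer.
    return {"role": "tool", "content": str(content)}
```

The general loop would be: send the messages plus `TOOLS`, check the reply for tool calls, run each one through something like `run_tool_call`, append the results to the conversation, and ask the model again. The model also has to support tool calling for any of this to work.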

Yeah, how does this work? Do I need to set up the tools in Home Assistant, or do I need to set up tools with a system prompt / Modelfile on the Ollama server? The Ollama integration says the AI instructions use Home Assistant templating. Do I need to create a custom template prompt that passes the info to the Ollama server in a way that works with the tools? https://www.home-assistant.io/docs/configuration/templating/

My bad, I did not catch that this was Home Assistant... I get the feeling that should be taken care of for you, based on this:

https://www.home-assistant.io/integrations/ollama/

Sorry to have to tell you... I don't know :(