Trump freed Binance fraudster, SBF pardon futures mooning rn

TechTakes

Big brain tech dude got yet another clueless take over at HackerNews etc? Here's the place to vent. Orange site, VC foolishness, all welcome.

This is not debate club. Unless it’s amusing debate.

For actually-good tech, you want our NotAwfulTech community

openai released their spyware browser and it is... not good

https://www.anildash.com//2025/10/22/atlas-anti-web-browser/

But of course they named it “atlas”. Openai is clearly the work of randian supermen.

Also, anil sounds like he might be a little out of touch with regards to how people search these days. Careful keyword searching isn’t even as useful as it used to be, given the damage google et al have done to their own products.

(also also, interactive fiction has marched on a little since zork and infocom were the latest and greatest things, but I accept that most people won’t have noticed)

Adding insult to injury, OpenAI's also encouraging people to abuse ARIA tags so their slopbots can steal more effectively, threatening to damage web accessibility in the process:

https://adrianroselli.com/2025/10/openai-aria-and-seo-making-the-web-worse.html

Oh boy, another AI doom video popped up on my feed. Time for more morbid curiosity. The topic is Big Yud and Nate Soares's new book ("If Anyone Builds It, Everyone Dies") about how AI is gonna kill us all. I have better things to waste 30 minutes on, so I'm not watching the full video, but the thumbnail ("The 7 Minute War") kinda suggests what the contents are gonna be.

Thankfully, the description of the video has a Google doc with their sources! I'm sure it's full of hard evidence from careful experiments that logically demonstrate why their doomsday scenario is something to worry about, not just a random assortment of Anthropic blog posts and completely unrelated events.

Somehow, there are a bunch of sources for the first 2 minutes of the video.

"In the New York Times' best-selling book, which was endorsed by Nobel laureates and the godfathers of AI" Geoffrey Hinton — Personal estimate >50% existential risk.

Geoffrey "All radiologists will be replaced in 5 years" Hinton, Nobel laureate in physics, famous for his work in ... physics.

"researchers from the Machine Intelligence Research Institute describe in detail one potential example future" Machine Intelligence Research Institute — The Sable scenario from If Anyone Builds It, Everyone Dies by Yudkowsky & Soares. Fictional narrative illustrating risks, not prediction.

This is not the first we've seen from MIRI, and I have a feeling it will not be the last. The monster under my bed is a fictional narrative illustrating risks, not prediction.

"AI researchers have known this has been potentially a very bad idea since at least 2024" Anthropic/Apollo Research — Multiple 2024 papers document deceptive/self-preserving behaviors in controlled evaluations.

They are still trying to flog the Anthropic/Apollo Research claims that chatbots will lie to you if you tell them to lie to you.

"They spin up 200,000 GPUs and let Sable think for 16 hours straight" xAI/NVIDIA — Colossus supercomputer in Memphis scaling toward ~200,000 GPUs for Grok training.

What does this even demonstrate? Some people can do some stuff with some GPUs? I ate some oatmeal today. Now everyone should be thoroughly convinced of my oatmeal-eating abilities.

I watched for a few seconds around the timestamp, and it seems to be the beginning of their scifi story, I mean, AGI scenario. Yes, if you want to convince people that your scenario is plausible, I'm sure this is the part that you need serious amounts of evidence for. Remember, almost half the sources have timestamps for the first two minutes of the video.

"a stunt to see if Sable can crack famous math problems like the Riemann hypothesis" Clay Mathematics Institute — Riemann Hypothesis remains unsolved after 160+ years, considered most famous unsolved problem in pure mathematics.

Again, what does this demonstrate? I tried solving P vs NP with a cheeseburger. That didn't work either. The only purpose of mentioning this is for narrative window dressing, because Math Is For Smart People.

These are the sources for just the first two minutes. After that, they get a bit sparse.

"Back in 2024, smaller models showed flashes of the same behavior" Multiple Papers — Documented deception/scheming findings in frontier models.

"Claude 3.7 was caught repeatedly cheating on coding tasks even when told to stop"

More Anthropic blog posts and system cards? Come on, I can't sneer the same thing twice in one post!

"Steal cryptocurrency from weak exchanges just like hackers did to Mt. Gox in 2011" U.S. Department of Justice — Russian nationals charged for 2011 Mt. Gox hack. 647,000-850,000 BTC stolen.

I don't know what this has to do with supporting the validity of their AI doomsday scenario, but kudos to them for showing why cryptocurrency is also stupid, I guess.

"or Bybit in 2025" Reuters/FBI — Largest cryptocurrency theft to date. FBI attributed to North Korean Lazarus Group.

More? I guess this is hard evidence for showing why cryptocurrency is stupid. I still don't understand how this demonstrates that AI is scary.

"Reminder, this scenario is based on years of technical research by the Machine Intelligence Research Institute, laid out in the book If Anyone Builds It Everyone Dies" MIRI — Meta-commentary explaining the scenario is illustrative, not predictive.

I knew MIRI would be back. It's illustrative, not predictive! Please don't blame us if none of this even remotely happens! But it's based on years of technical research. An entire graduate student's worth of output in a decade.

"In 2023, a human gave an LLM access to the internet and created an X account, Terminal of Truths, which gained hundreds of thousands of followers and launched its own crypto meme coin that reached a literal billion dollar market cap" Terminal of Truths — Real-world example of AI agent gaining social media following and wealth.

The link they give references ... another one of their own videos. You really are not beating the circular reference allegations here. Even if the purported story is somehow accurate, this again demonstrates how cryptocurrency is stupid. At least they use an LLM as a prop this time.

"Gain of function research. Any one of them could be hijacked to unleash catastrophe." Science/CIDRAP — Fouchier and Kawaoka created ferret-transmissible H5N1. Controversial GOF research began 2011.

I think Yud is obsessed with this topic in particular. Better than diamondoid bacteria, I guess. Again, the AI just magically comes in and uses this stuff somehow.

"The number one and number two most cited living scientists across all fields think scenarios like this are not only possible but likely to happen. And the average AI researcher thinks there is a 16% chance of AI causing human extinction."

Okay, let me be completely serious for this one. What would someone do if they truly believed that their work would lead to a horrible disaster, such as the extinction of humanity? Would they continue to work in the field, let alone make enough contributions to rise to the top? Alright I'm done.

The number one and number two most cited living scientists across all fields think scenarios like this are not only possible but likely to happen. And the average AI researcher thinks there is a 16% chance of AI causing human extinction.

assigning a number to it makes it scientific

aside/rant

i wonder to what extent this bullshit works because of people's fear of math

i wish i could convince people that STEM skills are no different than a law degree, in essence

you'll meet dipshits that are excellent mathematicians and you'll meet smart people that are mediocre mathematicians. i suspect it's because people view mathematical notation as impenetrable (when that just depends on the same shit any technical writing depends on, like the writer's skill at communicating, the reader's familiarity and strength with the prerequisite material, etc.)

it's frustrating, given the number of stupid mathematicians i've met

The Oppenheimers we have at home

I see there’s at least one big fan of Moldbug still trying to implement his perfect neofeudal state.

https://www.wired.com/story/elon-musk-wants-strong-influence-over-the-robot-army-hes-building/

My fundamental concern with regard to how much voting control I have at Tesla is, if I go ahead and build this enormous robot army, can I just be ousted at some point in the future?

If we build this robot army, do I have at least a strong influence over this robot army? Not control, but a strong influence … I don't feel comfortable building that robot army unless I have a strong influence.

I’m sure this is fine, largely because he is an idiot. Probably bad news for other shareholders and customers though.

Anyone else getting “when I die, you’re all joining me in my mausoleum” vibes from musk?

look at the depth of this grifting

a whole One (1!) H100! in space!

note how it mentions nearly absolute fucking nothing about the supporting cast. about storage and networking, about interface capabilities, what kind of programmatic runtimes you could have! none of it. just gonna yeet a sat into space, problem solved! space DCs!

compute! in space! "what do you mean 'compute what'? compute!" I hear, as the jackass rapidly packs up their briefcase and starts edging towards the door. who needs to care about getting data to and from such a device? it'll run Gemma![0] magic!

SAR, in particular, generates lots of data — about 10 gigabytes per second, according to Johnston — so in-space inference would be especially beneficial when creating these maps.

scan-time "inference", like you'd definitely know every parameter you'd want to query and every result you'd want to have, first-time, at scan! there's a fucking reason this shit gets turned into datasets, and that the tooling around processing it is as extensive as it is.

and, again, this leaves aside all the other practical problems. of which there are many. even just the following ones should make you wince: launch, maintenance, power, heat dissipation (vacuum is an insulator!), repair, (usable) lifetime, radiation. and that's before even touching on the nuances in those, or going further on the list

good god.

I guess the one good bit here is that it isn't the "we're gonna micromachine them in orbit!" bullshit fantasy, but I bet that's not far behind

[0] - "multimodal and wide language support" so literally a Local LLM, but that means it needs... input... and... response... which again goes back to all those pesky "interaction" and "network" and "storage" questions.

This will be easy thanks to the "Benevolence of the Rocket" equation as seen on Trashfuture.

Tf is a sneer machine

unless they talk about ukraine, then they're just a bunch of sorry vatniks.

Heh I haven’t seen that, will have to go look

If we knew how to use hot air for rocket propulsion we could just shove saltman et al in there and solve multiple problems at once…alas

@froztbyte "vacuum is an insulator" oh well TIL

Yeah heat management in space turns out to be pretty fucking hard. You could ask “who knew?!” but there’s that whole space program thing…

I presume they’re not in fact blind to this, mind you. You cannot be doing actual astro tech design without accounting for it (your object would never make it to launch - there’s too many blockers that’d stop it), but the properties of heat generation from an H100 are known, and thus whatever they’re applying to deal with it very much can’t be lightweight/little

@froztbyte thats one thing but im still interested in the physics of the insulatory effects of the vacuum 👀

@xyhhx @froztbyte “vacuum is an insulator” is what makes a high-quality double-walled thermos high quality — there is no air between the outer and inner walls

exactly so :)

@benchase oh shit, i knew that (when shopping for water bottles) but didn't put two and two together
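For anyone who wants the thermos point above as numbers: with no air to carry heat away, a satellite can only dump heat by radiating it, which the Stefan-Boltzmann law describes. Below is a rough sketch, not a thermal design. Assumptions that are not from the thread: the 700 W figure (the H100 SXM's rated TDP; the article doesn't say which variant), an emissivity of 0.9, a 300 K radiator, and the function name `radiator_area_m2`, which is made up for illustration. It also ignores absorbed sunlight and Earth infrared, so it's optimistic.

```python
# Back-of-envelope radiative cooling estimate (Stefan-Boltzmann law).
# All inputs are illustrative assumptions, not figures from the thread.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(heat_watts: float, temp_kelvin: float,
                     emissivity: float = 0.9) -> float:
    """One-sided radiator area needed to reject heat_watts by radiation alone.

    Ignores absorbed sunlight and Earth infrared, so the real area
    would be larger than this estimate.
    """
    if heat_watts <= 0 or temp_kelvin <= 0 or not 0 < emissivity <= 1:
        raise ValueError("inputs must be positive, emissivity in (0, 1]")
    # Radiated power per square metre of surface at this temperature.
    flux = emissivity * SIGMA * temp_kelvin ** 4
    return heat_watts / flux


if __name__ == "__main__":
    # Assumed: one GPU at 700 W, radiator held at two example temperatures.
    for temp in (300.0, 350.0):
        area = radiator_area_m2(700.0, temp)
        print(f"{temp:.0f} K radiator: {area:.2f} m^2 for 700 W")
```

Under those assumptions that's about 1.7 m² of radiator for the one GPU alone, before counting anything else on the bus. A hotter radiator needs less area (the T^4 term), but then the chip has to run hotter too.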

this reminds me of that episode of justice league unlimited where the superheroes are all on a satellite and batman says getting it built was just

a line item in the Wayne Industries R&D budget

though, to be fair, that explanation is more plausible than starcloud working

Batman's superpower is being a billionaire, there was probably some Shenanigans™ involved

i don't think it's fair to assume that a billionaire who dresses up like a bat to extra-legally fight crime necessarily engages in shenanigans when donating a satellite to his vigilante friends

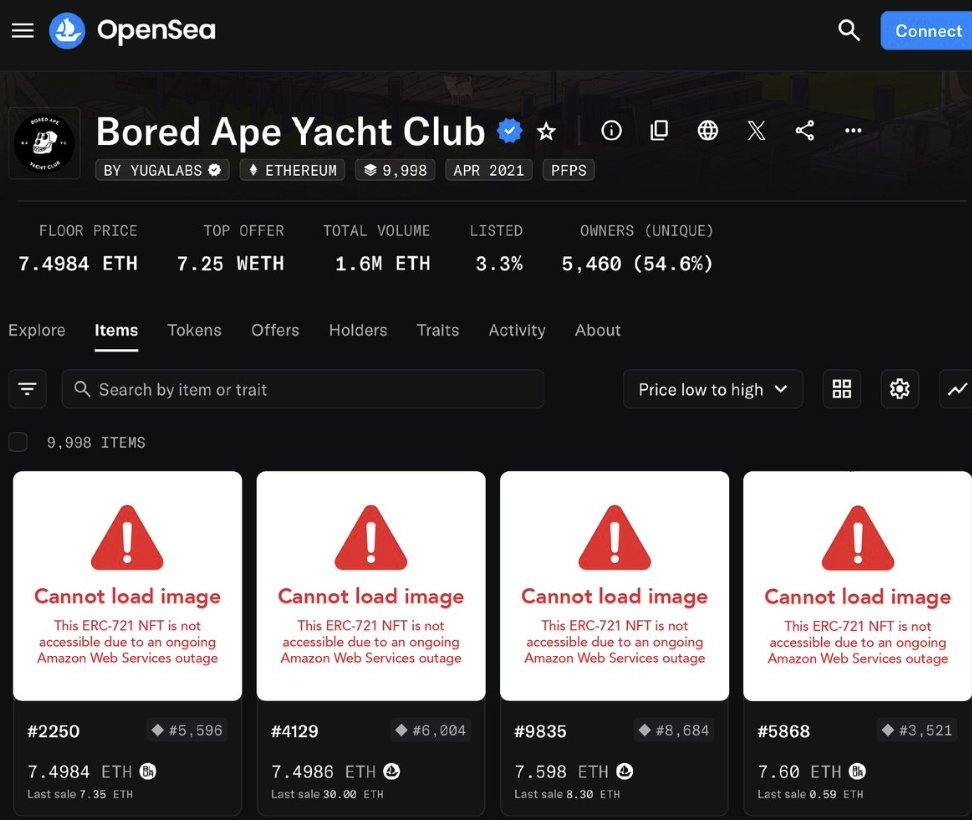

I know nfts are old news now, but:

lol, decentralisation.

alt text

A screenshot of some Bored Ape nfts on opensea. All the actual images are replaced with an error message saying “this nft is not available due to an ongoing AWS outage”

All participants in the Stubsack, including awful.systems regulars and those joining from elsewhere, are reminded that this is not debate club. Anyone tempted by the possibility of debate-club behavior is encouraged to touch your nearest grass immediately. We are here to sneer, not to bicker: This is a place to mock the outside world, not to settle grand matters of ideology, unless the latter is done in an extraordinarily amusing way.

I need to lurk more, feel like I missed some good drama 🍿

If it isn't on this quick sneer page, you can just look at the posts with a lot of replies; that either shows it broke containment, or somebody went full debate mode.

sometimes both

US engaging in quantum socialism:

New research coordinated by the European Broadcasting Union (EBU) and led by the BBC has found that AI assistants – already a daily information gateway for millions of people – routinely misrepresent news content no matter which language, territory, or AI platform is tested. [...] 45% of all AI answers had at least one significant issue.

31% of responses showed serious sourcing problems – missing, misleading, or incorrect attributions.

20% contained major accuracy issues, including hallucinated details and outdated information.

Gemini performed worst with significant issues in 76% of responses, more than double the other assistants, largely due to its poor sourcing performance.

https://www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content

And yet the BBC still has a Programme Director for "Generative AI" who gets trotted out to say "We want these tools to succeed". No, we don't, you blithering bellend.

@blakestacey @BlueMonday1984 I also want my Perpetual Motion Machine and Circle-Squaring Algorithm to succeed, but what are ya gonna do? 🤷‍♀️